Deleted

Deleted Member

Posts: 0

|

Post by Deleted on Apr 5, 2013 0:45:52 GMT -5

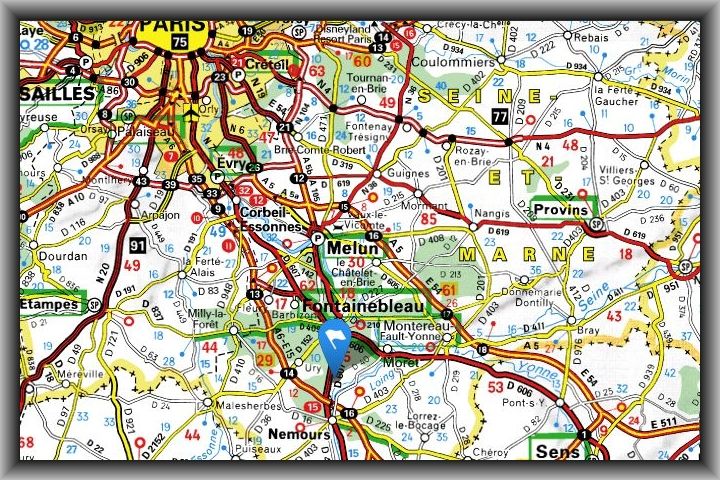

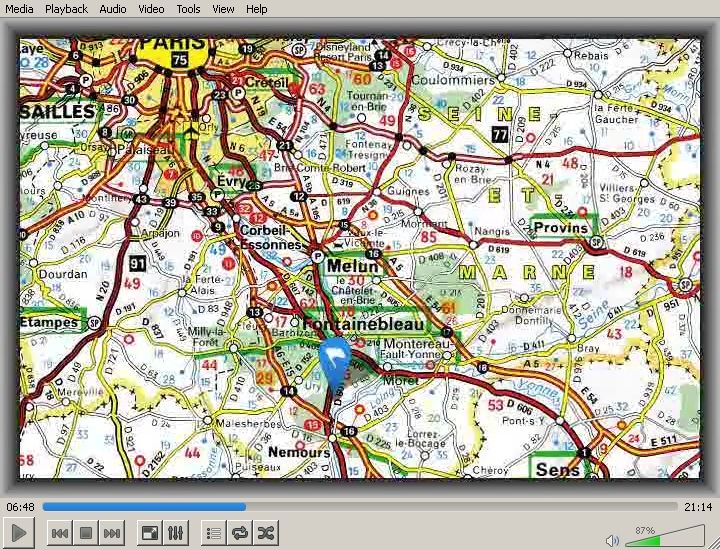

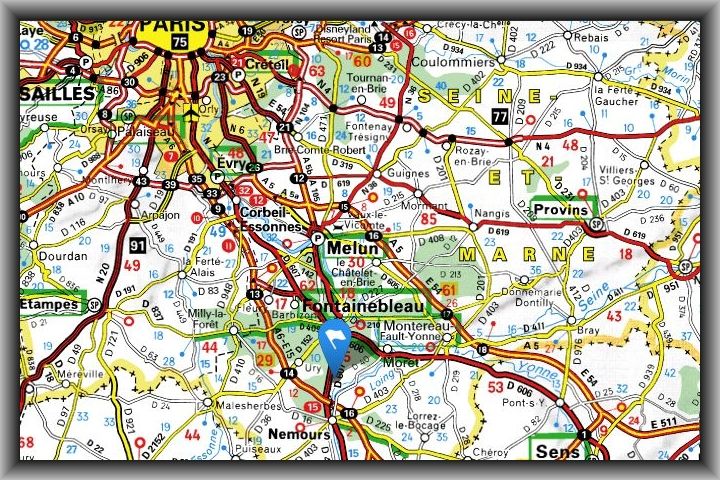

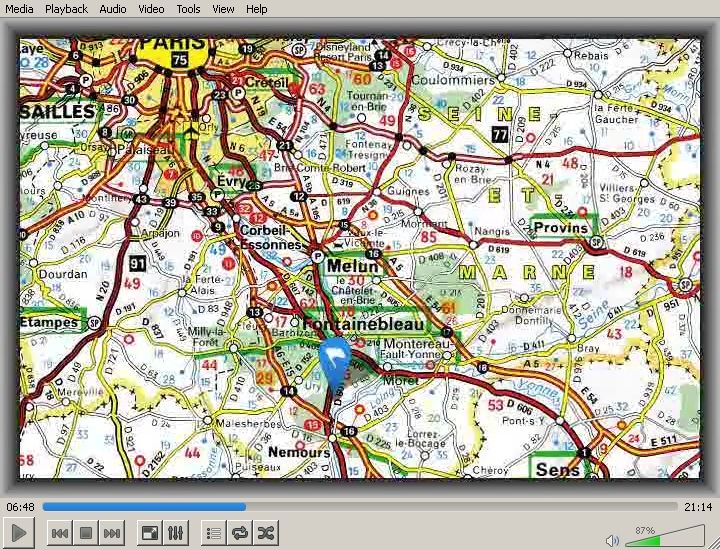

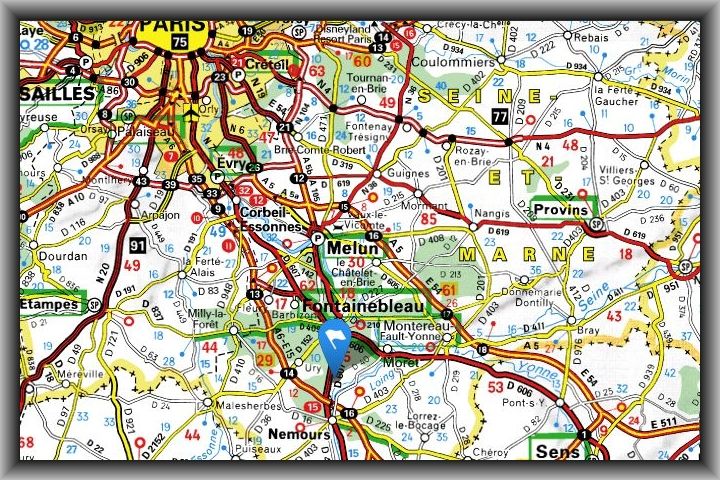

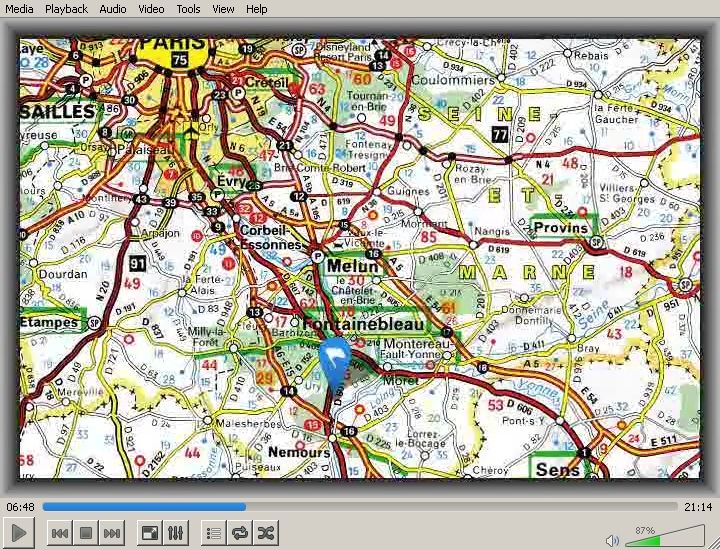

It is the human condition is it not? And indeed, the next item in the Third Programme's repertoire is nearly ready, but over the past day or two I have struck this unexpected problem. Here is the original image (which should be displayed unchanged for about a minute in the video):

And here is a screen-shot of how it actually looks in the video:

It will be evident that the quality of the image has deteriorated considerably - compare for instance the green patch underneath Coulommiers - and what, if possible, is worse is that throughout the period of its display, little artefacts wriggle to and fro. In short, unacceptable.

Until now I have made a separate video file for each "slide" or page of music, and after that have attempted to conjoin or concatenate them. Certain format conversions have been necessary, and that is where this loss of quality has occurred. So yesterday I tried all kinds of things, and have now found that a simple single "mov" file affords by far the best quality. But there is no way to concatenate mov files in a lossless manner (unless by reading up on the format myself and programming something - but that will take longer and is something for the future).

So I have concluded that the best approach for the present is to prepare a single file in "mov" format, containing all the images, copied as many times as there are frames. The frame rate can be very low, say four per second. Good quality audio at an average of 425 kilobits per second can be merged in, and the resulting programme itself can remain as a "mov" file. All this I have tested, and the task that remains is to prepare - by copying and renaming - all the necessary images. This will necessitate a further delay, but only of a day or so.

Post by Deleted on Apr 5, 2013 2:36:00 GMT -5

Good morning, Sydney Grew! I trust that all is well with you this Easter! If I may address your question below directly: "It is the human condition is it not?" On the contrary, I would argue that this is the non-human condition. The little wriggly thing above is no more than a computer-generated image!

As it happens, I am familiar with this part of France, as I have good friends who live near Paris. The green patch represents the Parc des Félins, a unique zoological park located in the Seine-et-Marne region of France in a magnificent oak woodland estate just to the south of Coulommiers. The Parc des Félins is a breeding and conservation centre predominantly devoted to the cat family, from the smallest species (Sand Cats, Rusty-Spotted Cats, Margays etc.) to the largest (Tigers, Lions, Leopards etc.).

I would also recommend a visit to Vaux le Vicomte and Fontainebleau, a little further south. With over 1500 rooms at the heart of 130 acres of parkland and gardens, Fontainebleau is the only royal and imperial château to have been continuously inhabited for seven centuries. A visit to Fontainebleau opens up an unparalleled view of French history, art history and architecture. I commend it to everyone reading 'The Third'.

Post by Deleted on Apr 6, 2013 0:31:53 GMT -5

After changing the type of the intermediate files from "mpg" to "vob," I discovered that the video quality had improved somewhat, to the point that now it has been possible to make the programme available. But I would really like to use intermediate files of the type "mov"; they appear to offer lossless quality. The problem there is that of the many methods of joining "mov" files I have tried none has proven satisfactory. I don't see why it should not be possible, though, so I am going to attempt to code it up myself.

Programme four, Alexandroff's Fourth Sonata, is nearing completion.

Post by Deleted on Apr 8, 2013 11:41:09 GMT -5

After investigating dozens of different methods, none of which worked, I have finally found a simple, straightforward and open way to join up a number of parts to make a video without losing visual quality. It is:

1) For each of the eighteen or so separate images which are to go into the video, generate an intermediate file as raw unformatted data. Such files are given the extension "yuv" and are enormous. I was obliged to use a portable hard drive.

2) Use a simple "copy" command to concatenate them all, which results in a single even more enormous file - sixteen gigabytes!

3) Use ffmpeg to convert the single enormous yuv file, together with the sound file, into a much more reasonably-sized combined mp4 file. The audio track thereof occupies 57 megabytes, and the video track represents a reduction of sixteen gigabytes down to only 9 megabytes, but affords - finally - excellent quality. (Total file size 66 megabytes.)

Here is the technical procedure, the detail of which may interest some readers:

1) Generate an intermediate yuv file for each image (they are all 720 by 480 pixels here):

The -t arguments are the number of seconds for which each image is to be displayed. The enormous yuv files contain repetitive data, and their size could be much reduced; but as I have a fast usb connection, and they are only temporary, it is not worth bothering with.

2) Concatenate all eighteen intermediate files:

3) Generate the final output from the two input files "enormous.yuv" (for the video track) and "DeliusDoubConcVBR425.m4a" (for the audio). I have called the final output file "TheThird05.mp4" here. There is no need to indicate a video codec; in fact ffmpeg will object if one attempts to do so:

And I am glad to report that the little wriggly things have departed.

Post by neilmcgowan on Apr 8, 2013 13:07:24 GMT -5

> And I am glad to report that the little wriggly things have departed.

Well, I understood that bit, anyhow

Post by Deleted on May 3, 2013 9:22:32 GMT -5

I have now investigated the enormous intermediate files, mentioned above. For a sample image to be displayed for just two seconds, ffmpeg generates a YUV file with a size of 69,120,000 bytes. Upon inspection, it will be found that this file contains fifty identical sections, each having a length of 1,382,400 bytes.

The default frame rate is twenty-five per second, and so each of the fifty identical sections contains the data for one frame. This may be confirmed as follows:

According to the ffmpeg documentation, "a YUV file contains raw YUV planar data. Each frame is composed of the Y plane followed by the U and V planes at half vertical and horizontal resolution." My test image has a resolution of 1280 by 720 pixels, and so the Y plane will require 1280 x 720 = 921,600 bytes (assuming one byte per pixel), and each of the U and V planes will require 640 x 360 = 230,400 bytes. Then 921,600 + 230,400 + 230,400 = 1,382,400 which is the size of one of the above-mentioned sections.
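The arithmetic above can be checked mechanically. What follows is only a minimal sketch in C++, assuming 8-bit samples and the planar 4:2:0 layout quoted from the ffmpeg documentation; the function names `yuv420_frame_bytes` and `yuv420_clip_bytes` are hypothetical and belong to no library.

```cpp
#include <cassert>
#include <cstdint>

// Bytes in one raw planar YUV 4:2:0 frame with one byte per sample:
// a full-resolution Y plane, then U and V planes at half the
// horizontal and half the vertical resolution.
// Assumption: width and height are both even.
std::uint64_t yuv420_frame_bytes(std::uint64_t width, std::uint64_t height) {
    assert(width % 2 == 0 && height % 2 == 0);
    const std::uint64_t y_plane = width * height;
    const std::uint64_t chroma_plane = (width / 2) * (height / 2);
    return y_plane + 2 * chroma_plane;
}

// Bytes in a raw clip of the given length at a fixed whole frame rate.
std::uint64_t yuv420_clip_bytes(std::uint64_t width, std::uint64_t height,
                                std::uint64_t seconds, std::uint64_t fps) {
    return yuv420_frame_bytes(width, height) * seconds * fps;
}
```

With these definitions `yuv420_frame_bytes(1280, 720)` yields 1,382,400 and `yuv420_clip_bytes(1280, 720, 2, 25)` yields 69,120,000, in agreement with the sizes observed above.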

Since the broadcasts - at least so far - have consisted of still pictures only, the amount of intermediate data for each picture can be reduced to 1,382,400 bytes (one frame) plus an integer frame count. I say "intermediate" because what must come next is the final "multiplexing" step, where ffmpeg combines the audio data with the video data. The great thing about these raw frame data is that they can simply be appended or concatenated. Thus I believe it will be possible to rig up a programme to "pipe" the combined data into ffmpeg, obviating the need for a further large file. (Indeed using a more complicated combination of pipes within pipes and multiple instances of ffmpeg the intermediate data may be able to be done away with altogether - we shall see. Something of that kind may be useful when we start to use moving images - such as Dr. Williams's Sea Symphony will surely call for.)

Post by Deleted on May 17, 2013 7:12:19 GMT -5

I have now found out how to avoid the horrendously enormous intermediate files - 45 gigabytes - described above. Here is my latest way of making a video from slides and music:

1) Prepare the slides as jpg files. Currently they measure 1280 by 720 pixels and occupy on average about 320,000 bytes (320 kilobytes) each. A half-hour "broadcast" in the current style will require about twenty-five slides. (Of course more if there is more movement!)

2) Use ffmpeg to convert each slide to yuv format, as follows:

The "-i" means "input file" and the "-y" is a mere convenience meaning "overwrite the following output file if it already exists." One line of the above type will be required for each slide. These twenty-five or so lines can be put in a batch file and their execution will be effectively instantaneous.

Each of the yuv files generated in this way will contain the data for just one frame of the video, and (for 1280 x 720 images) they will all be exactly the same size: 1,382,400 bytes (as explained in a previous post).

3) Next prepare a control file containing one line for each of the yuv files. Each line will specify a) a duration in seconds (to the nearest hundredth of a second) and b) the name of the image. Here is an example:

Note that the conversions done in step 2 above will need to be done only once for each image, whereas this control file with the timings will have to be altered many times as we try to synchronize the images precisely with the music track.

4) Now we need a tiny programme which will perform the following tasks:

a) Read in the control file one line at a time

b) Convert the duration field to a frame count. Since we use twenty-five frames per second, we simply multiply by twenty-five. It is of no consequence if the result is rounded down to a whole number of frames, since the error is then less than one twenty-fifth of a second.

c) Read in the yuv file for the image whose name is given in the control file, and put it in an area of memory of which the size is 1,382,400 bytes. So we now have in memory the data for one frame.

d) Output the data for that single frame the number of times calculated in step b. For example, if a slide is to be displayed for a duration of ten seconds, we must output the data 10 by 25 = 250 times. Since one frame consists of 1,382,400 bytes, we must output 1,382,400 by 250 = 345,600,000 bytes for those ten seconds of video. When I say "output" I mean "take those 1,382,400 bytes (one frame) from the memory area in which they are saved, and send them to the pre-defined file named 'stdout'".

e) And naturally all the above procedure is repeated for each line of the control file.

This tiny programme may of course be written in the language of one's choice: Perl, Fortran, Assembly, or the c family. Let us name it "send_frames". A c++ example is provided below.

5) So the next and final step is to run the ffmpeg programme again. It simply accepts all that video data, uses a very small percentage of it to make our sharply focused mp4 video, and combines it with an audio file. Just one command is needed:

Note the vertical line, otherwise known as a "pipe", which sends the output from send_frames to the input of ffmpeg (specified by "-i -"). The "-f rawvideo" tells ffmpeg the format of the data; the "-s 1280x720" tells ffmpeg the size of the video frames in pixels; the second "-i" specifies the name of the input audio file ("passagVBR425.m4a"); and the phrase "-codec:a copy" tells ffmpeg to use the input audio data in its present format and not to attempt to convert the audio data to some other format. The extension ".mp4" attached to the output file name is necessary, since it tells ffmpeg which output video format to use.

This command will take only two or three minutes to execute. It will be observed that although the umpteen gigabytes of data are still being shuffled around, they are not in the end saved, hence everything transpires very much more quickly.
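The tiny programme of step 4 might be sketched along the following lines. This is a hedged illustration, not a definitive implementation: it assumes that each line of the control file holds a duration and a file name separated by white space, and that file names contain no spaces. The helper names `frames_for` and `read_frame` are hypothetical.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <fstream>
#include <istream>
#include <ostream>
#include <sstream>
#include <stdexcept>
#include <string>
#include <vector>

// Step b: duration in seconds -> whole number of frames, rounded down.
// The small epsilon guards against floating-point products that fall
// just short of a whole number (28.999999... where 29 was meant).
long frames_for(double seconds, int fps) {
    assert(fps > 0 && seconds >= 0.0);
    return static_cast<long>(std::floor(seconds * fps + 1e-9));
}

// Step c: read exactly one frame of raw data into memory.
std::vector<char> read_frame(const std::string& path, std::size_t frame_bytes) {
    std::ifstream in(path, std::ios::binary);
    std::vector<char> frame(frame_bytes);
    if (!in.read(frame.data(), static_cast<std::streamsize>(frame_bytes))) {
        throw std::runtime_error("cannot read a whole frame from " + path);
    }
    return frame;
}

// Steps a, d and e: for each control line, write the frame 'count' times.
// Returns the total number of frames written.
long send_frames(std::istream& control, std::ostream& out,
                 std::size_t frame_bytes, int fps) {
    long total = 0;
    std::string line;
    while (std::getline(control, line)) {
        std::istringstream fields(line);
        double seconds = 0.0;
        std::string yuv_name;
        if (!(fields >> seconds >> yuv_name)) continue;  // skip blank or malformed lines
        const std::vector<char> frame = read_frame(yuv_name, frame_bytes);
        const long count = frames_for(seconds, fps);
        for (long i = 0; i < count; ++i) {
            out.write(frame.data(), static_cast<std::streamsize>(frame.size()));
        }
        total += count;
    }
    return total;
}
```

A `main` function would open the control file as a `std::ifstream` and call `send_frames(control, std::cout, 1382400, 25)`, the output then being piped into ffmpeg as in step 5. Passing the streams as parameters is merely a design choice which allows the logic to be tried out on small dummy frames before any gigabytes are sent anywhere.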

Here are a few lines from the "c" or "c++" programme "send_frames" which may be of use:

I tested this method for a while, and got it working. Then I used it with a different yuv file, and it stopped working! The reason, I discovered, is that Microsoft, in their attempts to "help" the user, as usual only hinder him. Because stdout is by default opened in text mode, every 0a byte in the frame data was being converted to the two-byte sequence 0d 0a, so that the frames were both corrupted and lengthened. It is necessary to add the line:

to put a stop to that behaviour by setting stdout to binary mode. The line is not necessary under Unix.
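A call of this kind, together with a small demonstration of the damage that text mode does, may be sketched as follows. It assumes the Microsoft C runtime, in which `_setmode`, `_fileno` and `_O_BINARY` are available; the helper names `set_stdout_binary` and `text_mode_translate` are hypothetical, and the second exists purely for illustration.

```cpp
#include <cstdio>
#include <string>
#ifdef _WIN32
#include <fcntl.h>  // _O_BINARY
#include <io.h>     // _setmode
#endif

// Put stdout into binary mode so that 0a bytes in the frame data pass
// through untouched. Returns true on success. Outside Windows there is
// no text/binary distinction, so nothing needs to be done.
bool set_stdout_binary() {
#ifdef _WIN32
    return _setmode(_fileno(stdout), _O_BINARY) != -1;
#else
    return true;
#endif
}

// Illustration only: mimics what text-mode output on Windows does to
// raw bytes, namely inserting 0d before every 0a.
std::string text_mode_translate(const std::string& raw) {
    std::string out;
    for (char c : raw) {
        if (c == '\n') out += '\r';
        out += c;
    }
    return out;
}
```

`set_stdout_binary()` would be called once, before the first frame is written. The second function shows why the fault appeared only with certain images: a frame containing no 0a bytes passes through text mode unchanged, whereas every 0a byte present adds one byte to the stream and so shifts all the data that follow.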
